The discussion about AI in education has changed quickly. What began as small experiments is now influencing district budgets, purchasing decisions, and long-term teaching plans. Schools are no longer debating if AI belongs in classrooms. Instead, they are focused on how to use it responsibly, effectively, and in ways that can be measured.

In 2026, decisions about using AI are shaped by policies, equity goals, privacy rules, and the need for proof of results. Schools that see AI as a long-term investment, not just a trend, are seeing real improvements in efficiency, personalized learning, and support for teachers. This guide turns federal advice, market trends, and school needs into a practical plan for buyers, developers, and investors.

What Is AI in Education

AI in education means using smart systems that look at data, find patterns, and help with teaching or administrative decisions in schools. Unlike simple digital tools, these systems can adjust content, provide insights, automate tasks, and help teachers make decisions.

Modern AI-powered education systems include:

- Adaptive learning platforms

- AI-powered tutoring systems

- Automated assessment tools

- Predictive analytics for student performance

- Teacher workflow automation assistants

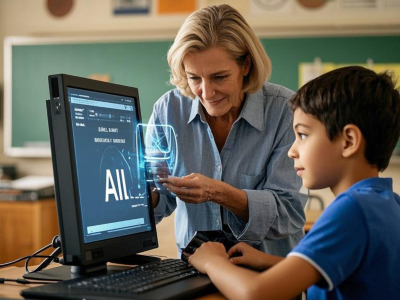

Importantly, guidance from the U.S. Department of Education emphasizes that these systems must remain human-centered: AI should assist, not replace, educators. As the global market for AI in education expands rapidly, driven by rising EdTech investment, digital transformation initiatives, and demand for personalized learning, policy frameworks are reinforcing responsible growth.

Why AI in Education Is a Strategic Priority in 2026

Policies are becoming more stable. Procurement teams are becoming more experienced at evaluating AI products. Expectations for using technology responsibly are clearer than before. Current guidance asks schools to focus on:

- Human oversight

- Privacy and data protection

- Equity and accessibility

- Transparent system design

- Evidence-based evaluation

This advice makes it clear that using AI in schools needs strict rules and oversight, not just enthusiasm. For school leaders, this means linking AI use to results that can be measured in teaching. For vendors, it means making sure their products are easy to understand and meet all requirements.

The Four Market Forces Shaping AI in Education Adoption

Understanding these main factors will help determine which tools will succeed and which will not.

1. Teacher-in-the-Loop Automation

Most AI tools in education are made to support teachers, not replace them. These systems act as extra help, but teachers still make the key decisions about:

- Pacing

- Assessment

- Content selection

- Intervention strategies

The best platforms help teachers save time, offer resources for different needs, and point out useful information from student data. Tools that make major instructional decisions without explaining how they reach them are unlikely to be adopted.

2. Adaptive Learning with Equity at the Core

Equity metrics are becoming embedded in procurement standards. Adaptive learning systems must support:

- English learners

- Students with disabilities

- Multiple learning modalities

- Accessibility standards

Using AI responsibly in schools means checking for bias, using a wide range of data, and keeping records of fairness checks. More school districts now ask vendors to prove their systems work for all student groups before making large purchases.
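One way to keep a record of a fairness check is to compare each student group's results against the overall pilot result. The sketch below is illustrative only: the group labels, the score gains, the `min_group_size` threshold, and the `tolerance` value are all assumptions, and a real review would need larger samples and a proper statistical method.

```python
from collections import defaultdict

def subgroup_gaps(records, min_group_size=2):
    """Return each group's mean score gain minus the overall mean gain."""
    by_group = defaultdict(list)
    for group, gain in records:
        by_group[group].append(gain)
    all_gains = [gain for _, gain in records]
    overall = sum(all_gains) / len(all_gains)
    gaps = {}
    for group, gains in by_group.items():
        if len(gains) < min_group_size:
            continue  # too few students to report responsibly
        gaps[group] = sum(gains) / len(gains) - overall
    return gaps

def flag_groups(gaps, tolerance=2.0):
    """List groups whose mean gain trails the overall mean by more than tolerance."""
    return sorted(g for g, gap in gaps.items() if gap < -tolerance)

# Hypothetical pilot data: (student group, score gain in points)
pilot = [
    ("english_learner", 2.0), ("english_learner", 4.0),
    ("general", 8.0), ("general", 10.0),
    ("students_with_disabilities", 6.0), ("students_with_disabilities", 6.0),
]

gaps = subgroup_gaps(pilot)
flagged = flag_groups(gaps)
print(gaps)
print(flagged)  # ['english_learner']
```

A flagged group is a prompt for human review, not a verdict. The value of the exercise is that the check and its result are written down and can be shared with the district.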

3. Trust, Transparency, and Student Data Privacy

Trust has become a procurement requirement. Leaders evaluating AI-enabled learning now ask:

- What student data is collected?

- How long is it retained?

- Who has access?

- How is bias mitigated?

- Can outputs be explained clearly?

Vendors who clearly explain their models, provide transparency reports, and show how they reduce bias can sell their products more quickly. Systems that are hard to understand are being replaced by those that are easy to use and explain.
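The data questions above can be turned into a simple inventory that a vendor fills in and a district reviews. The following is a minimal sketch under stated assumptions: the `DataField` structure, the field names, and the 365-day retention limit are hypothetical and do not come from any regulation or standard.

```python
from dataclasses import dataclass, field

@dataclass
class DataField:
    name: str
    purpose: str            # why the data is collected
    retention_days: int     # how long it is kept
    access_roles: list = field(default_factory=list)  # who can see it

def review_inventory(fields, max_retention_days=365):
    """Return human-readable issues for a reviewer to follow up on."""
    issues = []
    for f in fields:
        if not f.purpose.strip():
            issues.append(f"{f.name}: no stated purpose")
        if f.retention_days > max_retention_days:
            issues.append(
                f"{f.name}: retained {f.retention_days} days "
                f"(limit {max_retention_days})"
            )
        if not f.access_roles:
            issues.append(f"{f.name}: no access roles listed")
    return issues

# Hypothetical vendor inventory
inventory = [
    DataField("reading_scores", "adaptive pacing", 180, ["teacher"]),
    DataField("device_location", "", 730, []),
]

issues = review_inventory(inventory)
for issue in issues:
    print(issue)
```

In this example `reading_scores` passes, while `device_location` is flagged three times: no purpose, retention over the limit, and no access roles.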

4. Evidence-Driven Scaling

In 2026, schools are starting with short pilots before expanding AI learning programs. Successful districts:

- Launch small pilots

- Define success metrics in advance

- Collect formative and summative data

- Incorporate educator feedback

- Scale only when measurable progress is documented

This approach links research with real-world practice, follows federal advice, and builds trust among everyone involved.
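The pilot steps above amount to a gate: targets are written down first, and expansion happens only if the observed results meet them. Here is a minimal sketch of that gate. The metric names, targets, observed values, and the 70% educator approval threshold are all hypothetical placeholders.

```python
from dataclasses import dataclass

@dataclass
class Metric:
    name: str
    target: float            # agreed before the pilot starts
    observed: float          # measured at the end of the pilot
    higher_is_better: bool = True

    def met(self) -> bool:
        if self.higher_is_better:
            return self.observed >= self.target
        return self.observed <= self.target

def ready_to_scale(metrics, educator_approval, min_approval=0.7):
    """Return (decision, unmet items); scale only when nothing is unmet."""
    unmet = [m.name for m in metrics if not m.met()]
    if educator_approval < min_approval:
        unmet.append("educator_approval")
    return (len(unmet) == 0, unmet)

# Hypothetical pilot results
results = [
    Metric("reading_growth_points", target=5.0, observed=6.2),
    Metric("weekly_grading_hours", target=4.0, observed=4.5,
           higher_is_better=False),
]

decision, unmet = ready_to_scale(results, educator_approval=0.8)
print(decision, unmet)  # False ['weekly_grading_hours']
```

Here the pilot improves reading growth and has educator support, but grading time has not dropped to the agreed target, so the gate says to refine before scaling.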

A Practical Implementation Blueprint for AI in Education

To make sure technology spending leads to better teaching, schools should use a four-step process.

Define Instructional Objectives Before Technology

AI in education adoption should begin with clear goals, such as:

- Reducing teacher workload

- Increasing personalized learning

- Improving literacy outcomes

- Enhancing formative assessment

Establish governance guardrails:

- Data minimization policies

- Privacy protections

- Bias mitigation requirements

- Human oversight mandates

Schools should plan carefully before buying new technology.

Strengthen Procurement Standards

RFPs for AI in education tools should require:

- Model cards and documentation

- Human override controls

- Accessibility compliance verification

- Built-in evaluation dashboards

- Clear impact metrics

When buying AI tools, schools should spell out the responsible AI practices they expect and how student data privacy will be protected.

Invest in Professional Learning

Technology adoption fails without educator capacity. Districts that implement AI-driven education effectively allocate budget toward:

- Ongoing professional development

- Data literacy training

- Ethical AI usage guidelines

- Prompt design and evaluation skills

- Error detection and critical review

One-time workshops are not enough to help teachers keep using new tools. Ongoing support leads to lasting benefits.

Measure, Refine, and Scale

Evidence determines sustainability. Before expanding AI in education district-wide:

- Analyze student achievement metrics

- Assess teacher time savings

- Review bias or error reports

- Gather stakeholder feedback

- Adjust implementation strategies

Expanding without clear evidence brings financial and reputational risks.

2026 Product Checklist for Responsible AI in Education

Whether building or buying, use this checklist:

- Human Oversight: Teachers remain in control of pacing, grading, and instructional adjustments.

- Accessibility and Universal Design: Support multimodal input, assistive technologies, and inclusive interfaces.

- Bias Mitigation: Document dataset sources, conduct fairness reviews, and maintain reporting channels.

- Data Protection: Minimize data collection. Provide encryption, secure storage, and transparent retention timelines.

- Explainability: Offer age-appropriate explanations of outputs and confidence levels.

- Embedded Evaluation: Include analytics dashboards that measure impact on learning outcomes.

- Workflow Integration: Make sure AI tools for education make work easier, not harder.

Products that follow these standards are more likely to get approved by schools.

Budgeting for Sustainable AI in Education Growth

Financial planning should reflect responsible implementation.

- Allocate Funds for Training: Dedicate a percentage of license costs toward educator development.

- Build Small Innovation Labs: Pilot AI-driven education in controlled environments before large contracts.

- Embed Data Governance in Contracts: Negotiate privacy auditing, bias review protocols, and compliance clauses upfront.

Careful budgeting shows that a school is committed to the long term.
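As a rough illustration of the training set-aside, the arithmetic can be written down so the figure appears in the budget alongside license costs. The seat count, per-seat price, and 20% share below are made-up numbers, not recommendations.

```python
def training_budget(seats, license_cost_per_seat, training_share=0.15):
    """Compute license spend plus a training set-aside tied to that spend."""
    license_total = seats * license_cost_per_seat
    training_total = round(license_total * training_share, 2)
    return {
        "licenses": license_total,
        "training": training_total,
        "total": round(license_total + training_total, 2),
    }

# Hypothetical pilot: 500 seats at $12 each, 20% set aside for training
plan = training_budget(seats=500, license_cost_per_seat=12.00,
                       training_share=0.20)
print(plan)  # {'licenses': 6000.0, 'training': 1200.0, 'total': 7200.0}
```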

Common Pitfalls in AI in Education Implementation

Avoid these high-risk mistakes:

- Autopilot Instruction: Never delegate high-stakes decisions entirely to automated systems.

- Unclear Data Flows: Families deserve transparency regarding student data privacy and retention.

- Insufficient Professional Development: If teachers do not get ongoing training, fewer will use the new tools, and trust will decrease.

Market Outlook: Where AI in Education Is Headed

The next wave of AI-driven education will focus on:

- Teacher augmentation tools

- Adaptive learning ecosystems

- Real-time feedback engines

- AI-powered tutoring assistants

- Evidence-based impact measurement

Generic chatbot tools will become less popular unless they show clear learning results and have strong safety measures. Investors and vendors who focus on safety, fairness, and proven results will do better than others.

The Bottom Line: Turning Policy into Measurable Impact

AI in education is no longer experimental; it is strategic infrastructure. The path forward is clear:

- Lead with instructional goals

- Embed governance and transparency

- Invest in educator capacity

- Demand measurable outcomes

- Scale responsibly

When used thoughtfully, AI in education helps keep the human connections that make learning meaningful. Schools that combine new technology with strong policies, evidence, and fairness will get faster approvals, smoother rollouts, and better academic results in 2026 and beyond.